Design Thinking Process

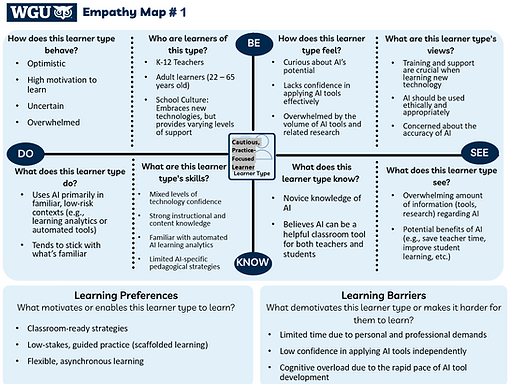

This page highlights how I applied the design thinking framework to develop the Testing AI Feedback & Assessment Tools module, demonstrating how research, empathy, and instructional design principles informed key decisions.

Empathize

Empathy Map

How Information Was Gathered

To inform the learner analysis and empathize phase, I drew on:

- Scholarly research examining K–12 teachers’ attitudes, perceptions, and AI self-efficacy
- Informal conversations with practicing K–12 teachers

Key Findings

- Teachers generally view AI as having strong potential in education.
- AI self-efficacy varies widely, often depending on prior experience and available support.
- Teachers want practical professional learning focused on how to use AI tools effectively.

Design Implications

These insights informed the creation of an empathy map and teacher persona that represent common confidence gaps, instructional needs, and motivations. This grounding ensured the module design reflects authentic educator experiences and supports guided, classroom-ready application.

How the Problem Was Defined

Insights from the empathy map, teacher persona, and prior research were synthesized to identify the instructional problem.

- The empathy map revealed feelings of uncertainty, overwhelm, and a need for structured support when using AI tools.
- The teacher persona reflected an experienced educator with strong instructional skills but low confidence with AI tools.

Key Findings

- Current state: Teachers report low confidence using AI-based feedback and assessment tools in classroom contexts.
- Desired state: Teachers feel confident and prepared to use AI-based feedback and assessment tools with practical, classroom-ready strategies.
- A clear confidence and application gap exists between teachers’ interest in AI and their ability to use it effectively.

Instructional Problem

K–12 teachers who lack confidence in using AI need practical guidance to effectively incorporate AI-based feedback and assessment tools into their classroom practice.

Define

Persona

Ideate

Mind Map

Guided Self-Directed Exploration

How Solutions Were Identified

A structured ideation process was used to explore instructional solutions that could support teacher confidence and practical application of AI-based feedback and assessment tools.

- Divergent and convergent thinking were used to generate and refine multiple design approaches.
- A mind map supported brainstorming across varying levels of instructional support and learner autonomy.
- Potential solutions were evaluated based on alignment to teacher needs, confidence-building, and classroom application.

Potential Solutions Considered

- Demonstration-based guided practice: Step-by-step video modeling with scaffolded practice for novice users
- Case-based guided practice: Analysis of realistic classroom scenarios using sample student work and AI-generated feedback
- Guided self-directed exploration: Structured prompts and resources combined with learner choice and hands-on tool exploration

Solution

A guided self-directed exploration model was selected to balance instructional support, learner autonomy, and immediate classroom application.

How the Solution Was Prototyped

A low-fidelity prototype was created to plan the structure, flow, and learner experience of the Canvas-based professional learning module before full development.

- An outline was developed to map how learning objectives connect to module sections, activities, and assessments, supporting alignment and instructional coherence.
- Pages were designed with a clear, repeatable layout, including How to Use This Page and What’s Next sections.
- The prototype supported early testing of learner flow and instructional sequencing across the module.

Design Implications

The solution was intentionally designed with accessibility, usability, and cognitive load in mind to support an inclusive and effective professional learning experience.

- Accessibility: Consistent headings, readable text, and structured layouts support diverse learner needs and equitable access
- User Interface & Navigation: Predictable page structure and clear labels reduce friction and support intuitive navigation within Canvas
- Cognitive Load: Content is chunked into focused sections with guided prompts to support attention and prevent learner uncertainty

Prototype

Low-fidelity Prototype

Test

Post-Module Guided Practice Experience Survey Results

Pre- and Post-Module Confidence Survey Results

How the Solution Was Tested

The module was implemented with participants to evaluate both learner experience and learner outcomes related to guided practice with AI-based feedback and assessment tools.

- Learner experience was examined through a post-module survey focused on participants’ perceptions of how the guided practice activities supported their understanding and learning of AI-based feedback and assessment tools. Results showed consistently positive ratings across all items, with mean scores at or above 4.5 out of 5, indicating that participants found the guided practice activities clear, supportive, and effective for learning.
- Learner outcomes were evaluated using pre- and post-module confidence surveys. Average confidence increased from 2.50 before the module to 4.19 after completion, with gains observed across all confidence measures. Participants reported feeling more informed and better prepared to independently use AI-based feedback and assessment tools following the guided practice experience.
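The size of the confidence gain reported above can be checked with a little arithmetic. The sketch below uses only the two means stated in the text (2.50 and 4.19). The 5-point scale maximum is an assumption carried over from the experience survey, and the function names are illustrative rather than part of the original analysis.

```python
# Reported mean confidence scores, taken from the survey results above.
PRE_MEAN = 2.50
POST_MEAN = 4.19

# Assumption: confidence was rated on the same 5-point scale as the
# experience survey. The source does not state this explicitly.
SCALE_MAX = 5.0


def absolute_gain(pre: float, post: float) -> float:
    """Raw change in the mean score, in scale points."""
    return post - pre


def relative_gain(pre: float, post: float) -> float:
    """Change expressed as a fraction of the pre-module mean."""
    return (post - pre) / pre


def normalized_gain(pre: float, post: float, scale_max: float) -> float:
    """Share of the available headroom (scale_max - pre) that was gained."""
    return (post - pre) / (scale_max - pre)


if __name__ == "__main__":
    print(f"Absolute gain:   {absolute_gain(PRE_MEAN, POST_MEAN):.2f} points")
    print(f"Relative gain:   {relative_gain(PRE_MEAN, POST_MEAN):.1%}")
    print(f"Normalized gain: {normalized_gain(PRE_MEAN, POST_MEAN, SCALE_MAX):.1%}")
```

Under these assumptions, the mean rose by 1.69 points, roughly 68% above the pre-module mean. These figures describe the reported means only; the source does not give a sample size or variance.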

Design Implications

Findings from the testing phase indicate that guided practice is an effective instructional strategy for supporting novice AI users’ confidence and learning experience. Participant feedback from the data collection instruments, along with supplemental performance data, suggests that explicit step-by-step guidance, structured reflection, and curated support resources contributed to positive learning outcomes. These findings have implications for both educational practice and future research: they highlight guided practice as a promising approach for supporting teacher learning with emerging technologies.